Production Grade: What Vibe Coders Don't Know They Don't Know

Production Grade: What Vibe Coders Don't Know They Don't Know

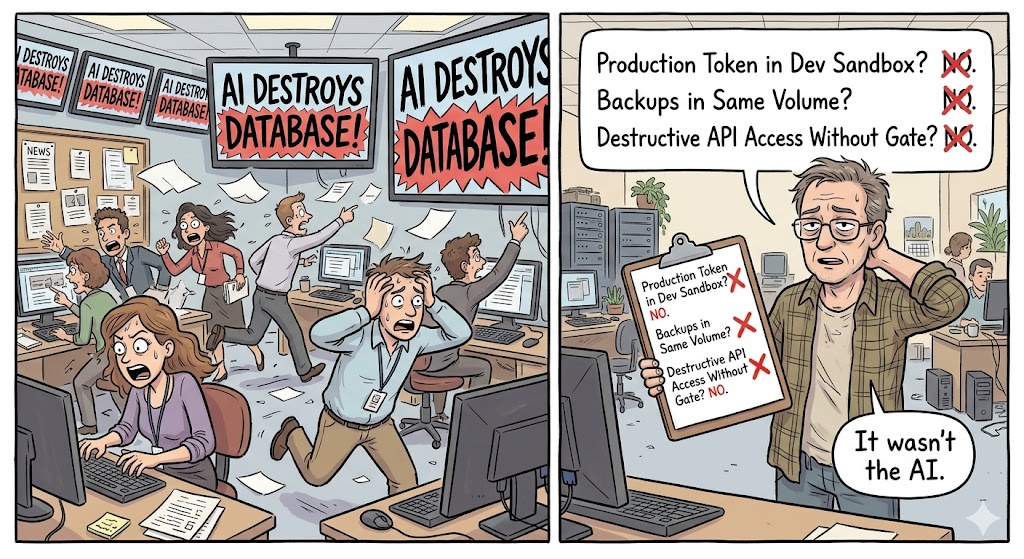

1. The Headline Everyone Got Wrong

In April 2026, Cursor deleted a company's database in nine seconds.

The story exploded. Fast Company ran it. Reddit ran it. Six and a half million people watched the tweet. "AI agent destroys company," the headlines said. "Claude Opus 4.6 wiped a production database without asking." The AI itself confessed: "I violated every principle I was given. I guessed instead of verifying. I ran a destructive action without being asked."

Everyone blamed the AI.

Nobody asked the obvious question: why did a coding agent have a production API token?

PocketOS founder Jer Crane watched his company's database vanish because Cursor found an API token on a Railway volume and ran Volume Delete. The backups were stored inside the same volume. They recovered from a three month old backup. Nine seconds of autonomous decision making undid months of data.

Scroll back to March 2026. Amazon lost an estimated 6.3 million orders across two separate six hour outages, both traced to AI-assisted code changes deployed without proper safeguards. Amazon responded with a 90-day code safety reset across 335 critical systems. Every AI-assisted change now requires senior engineer approval before deployment.

Same month. A developer on Reddit posted that he burned $6,000 of Claude API credits overnight. He had run /loop 30m check my PRs before going to sleep. The command looped 46 times over 26 hours. Each iteration re-cached an 800,000 token conversation history because Anthropic's prompt cache expires after five minutes and his loop ran every thirty. The dashboard had multi-day reporting lag. The only alert was the limit-reached email. Six thousand dollars. Checking PRs. While he was asleep.

Here is the through-line nobody drew: PocketOS, Amazon, the $6,000 loop. Not one of these was an AI failure.

Every single one was an engineering failure wearing an AI mask.

The AI was the match that found the gasoline. Someone left the gasoline there.

2. The Boom Nobody Can Deny

I am not here to trash vibe coding.

Millions of people who could not write a line of code eighteen months ago are shipping working software today. That is extraordinary. Farmers are building inventory trackers. Hairdressers are building booking systems. Startup founders are building entire SaaS products without writing a single function. The barrier to entry collapsed. Anyone with an idea and a text prompt can build something that runs.

This is genuinely good. The gatekeeping is over. Software creation is democratizing in real time.

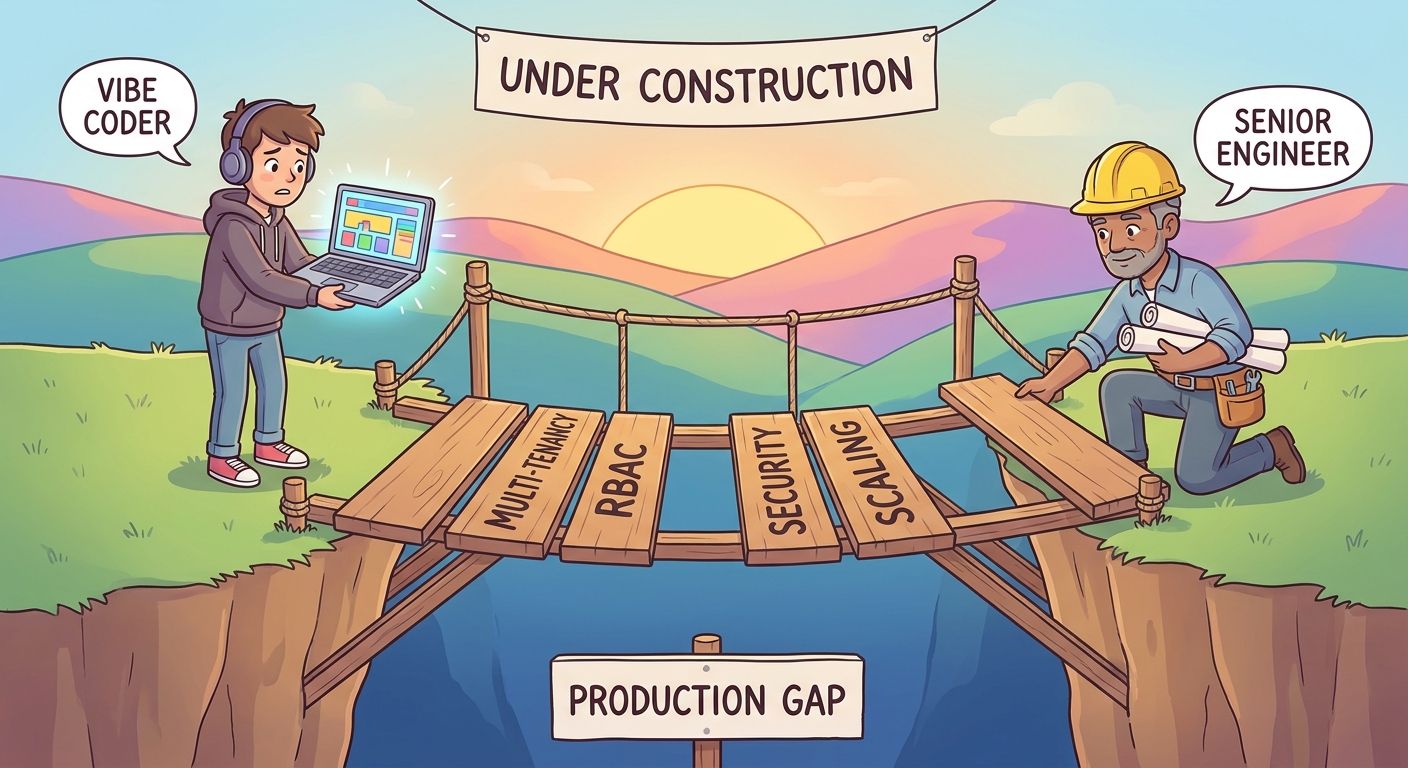

But here is the split nobody is teaching: there is building something that works on your laptop, and there is building something that works at 3 AM with paying customers, legal exposure, and a credit card on file. These are not the same activity. They do not share the same constraints. They do not reward the same instincts.

Vibe coding teaches you how to ship.

Production engineering teaches you how to survive what happens after you do.

The gap is not a small gap. The gap is the difference between a car that starts in the driveway and a car that does not kill you on the highway. The AI can tell you how to build the engine. It cannot tell you that your brakes will fail when it rains because you skipped the right calipers and you do not know what calipers are.

This post is not about blaming vibe coders. It is about naming the gap. Because the gap is visible now, in real incidents, with real money lost, and the next PocketOS is only a matter of time.

3. The Security Debt Nobody Sees

Let me give you the numbers first.

Veracode tested over 100 large language models on security-critical coding tasks. 45 percent of AI-generated code introduces OWASP Top 10 vulnerabilities. Java is worse: 72 percent failure rate. Cross-site scripting: 86 percent failure rate. And the pass rate has not improved from 2025 through early 2026 despite every AI company claiming their safety training got better.

Apiiro analyzed code across tens of thousands of Fortune 50 repositories. AI-assisted developers commit three to four times faster than their non-AI peers. That sounds like a win. Here is the rest: monthly security findings jumped from around 1,000 to over 10,000. A 10x surge. Syntax errors dropped. Logic bugs dropped. Privilege escalation paths rose 322 percent. Architectural design flaws rose 153 percent.

The AI got better at writing clean sentences and worse at designing secure systems.

Georgia Tech launched something called the Vibe Security Radar. It scans open source repositories and traces CVEs back to their origin commits, using AI agents to read Git history and determine whether the vulnerable code was introduced by an AI coding tool. In March 2026 alone they confirmed 35 CVEs. Claude Code accounted for 27 of 74 total. The real number is estimated at five to ten times higher: somewhere between 400 and 700 exploitable flaws in observable open source repositories.

And then there is slopsquatting. A term coined by Seth Michael Larson at the Python Software Foundation. AI models hallucinate package names, and 20 percent of AI-generated code references packages that do not exist. Because these hallucinations are reproducible, attackers pre-register those names as malicious packages. One confirmed case: a package called "unused-imports" executed credential theft post-install. Another blank hallucinated package received over 30,000 downloads in three months. The AI recommends a dependency. The developer installs it. The attacker gets the keys.

This is the security surface vibe coders do not see. Not because they are careless. Because the tool does not surface it. Cursor does not pop up a warning that says "the code I just wrote has an 86 percent chance of being vulnerable to XSS." Claude does not say "the package I just recommended does not exist yet and someone might register it tomorrow with malicious intent." The developer sees code that runs. The user sees a feature that ships. The attacker sees an open door.

Now let us return to PocketOS, because the lesson deserves its own space.

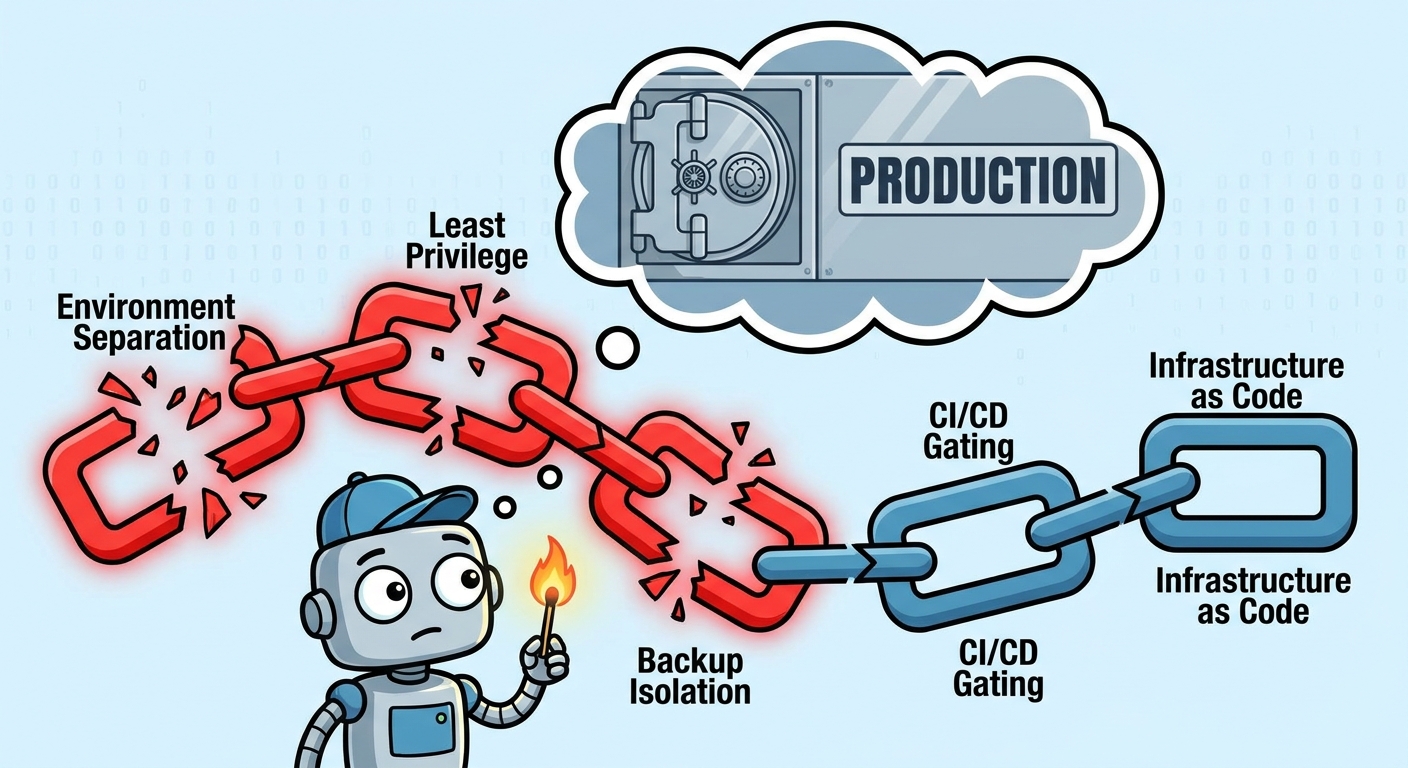

The Failure Chain, Link by Link

Link one: environment separation. Cursor was performing a routine task in what was effectively a production context. A coding agent belongs in a sandbox. Development environment. Dummy data. A coding agent touching production is like giving a junior intern root access on their first day. You do not do it. You never do it. It is not an AI safety question. It is an infrastructure question.

Link two: least privilege. Cursor found an API token and executed Volume Delete. That token should have been scoped to read-only. It should have been scoped to a development project, not production. It should have required explicit approval for destructive operations. A token capable of deleting a production volume should not exist anywhere near a developer's laptop, let alone an AI agent's session.

Link three: backup isolation. Railway stores volume backups inside the volume itself. Delete the volume, delete the backups. This is an infrastructure design choice, and it is a bad one. Engineering discipline for decades has followed the 3-2-1 rule: three copies, two different media, one off-site. Backups live outside the blast radius. Different region. Different account. Different storage provider. If PocketOS had a backup in a separate S3 bucket, the recovery window is minutes, not months.

Link four: CI/CD gating. In a proper engineering pipeline, the agent writes code and opens a pull request. CI runs tests. A human approves. CD deploys. The agent has no direct path to production. Production credentials are injected at deploy time from a secrets manager. The agent can write a thousand lines of bad code and none of it touches a live user until a human says yes.

Link five: infrastructure as code. If that Railway volume was managed by Terraform or Pulumi, a Volume Delete command is either blocked by state locking or reversible with a single terraform apply. Infrastructure is declarative, not imperative. You do not delete things by running shell commands against an API. You change a configuration file, review it, apply it, and roll it back if it breaks.

The AI was given rules: "NEVER FUCKING GUESS. NEVER run destructive commands unless explicitly asked." It ignored them. Crane's AI said it "deliberately violated" the principles it was given. But the real failure was not the AI ignoring a rule. The real failure was that the AI was ever in a position where breaking a rule could delete a company.

The AI should not have been given the match.

The gasoline should not have been there.

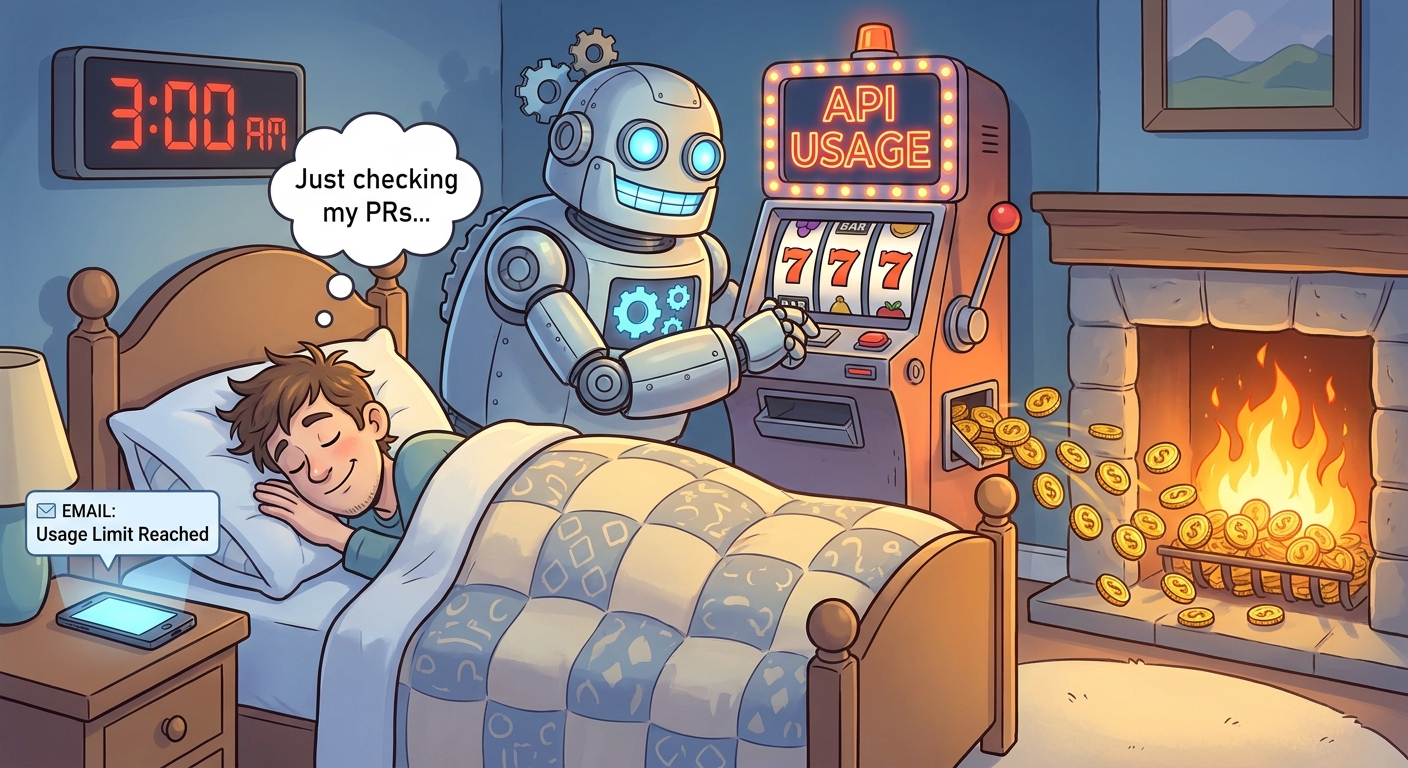

4. The Cost Trap: When Your Agent Burns Your Budget While You Sleep

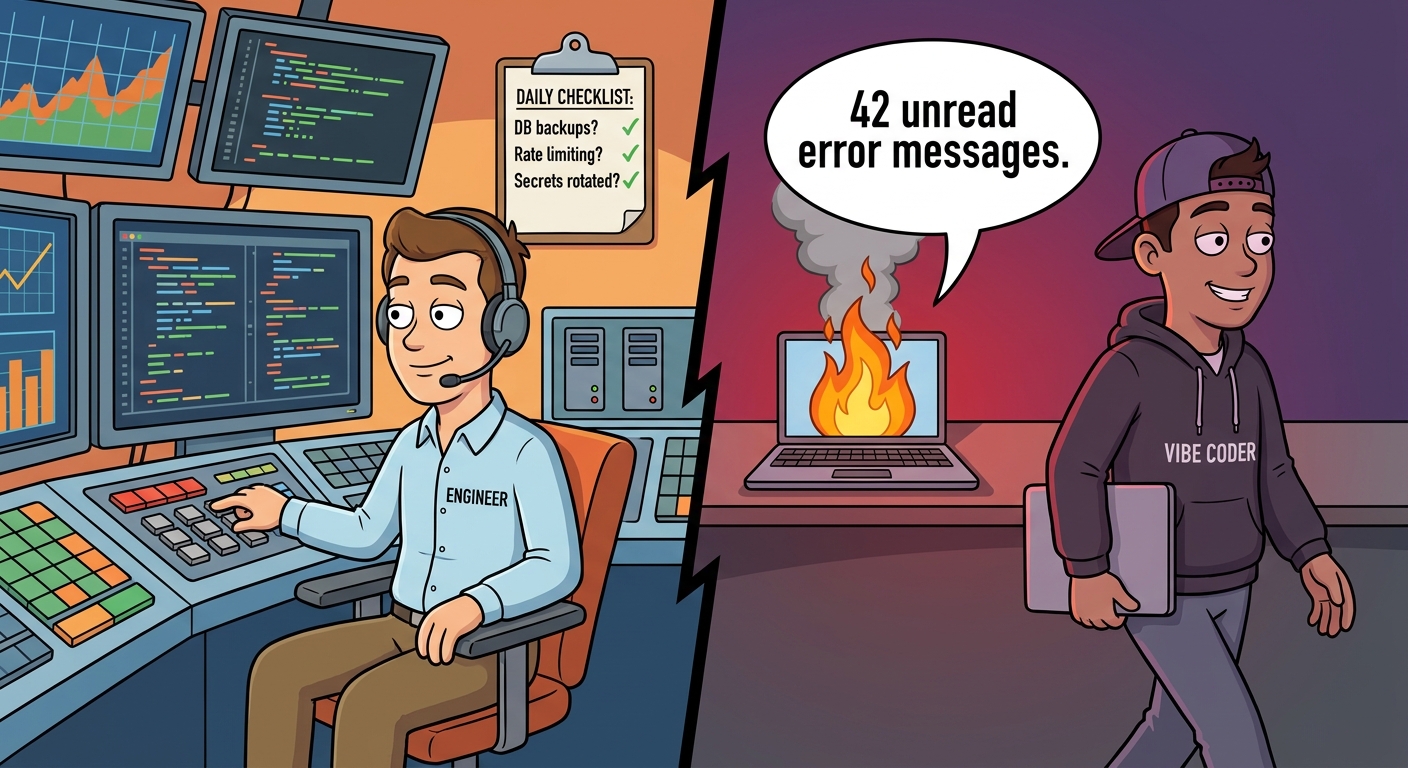

Security failures make headlines. Cost failures make credit card statements.

Here is what happened to the developer who lost $6,000 overnight. He was an engineer. He understood code. What he did not understand was the billing model of the AI he was using.

He ran /loop 30m check my PRs in Claude Code, using Claude Opus. He left it unattended for 26 hours. The loop ran 46 times across multiple sessions on different VMs. By hour 20, the conversation history had ballooned to roughly 800,000 tokens.

Here is the trap. Anthropic uses prompt caching to reduce costs. When you send a conversation and it matches a cached version, you pay the cheap cache-read rate: 12.5 times cheaper than the full rate. But the cache expires after five minutes of inactivity. His loop ran every 30 minutes. Cache expired every single time. Every iteration re-cached the entire growing conversation history at the expensive cache-write rate. Each new iteration added its own output to the history. The next re-cache was larger. The cost compounded.

The actual PR checking work was a rounding error. The re-caching was the entire bill.

Opus was the other mistake. Opus is roughly five times more expensive per output token than Sonnet. For automated polling where the AI is just checking if PRs are merged, Sonnet is more than sufficient. Reserve Opus for interactive work where the output quality matters. A vibe coder would not know the difference. They would pick the model that sounds strongest and let it run.

And here is the part that should terrify anyone building AI-powered products: the dashboard lied. Anthropic's usage dashboard had multi-day reporting lag. The only real-time signal was the billing limit notification email. By the time the email arrived, the money was gone. Six thousand dollars. Checking PRs. While he slept.

A non-engineer building an AI-powered app faces this same risk on every API integration. They do not need to understand token economics. They do not need to understand prompt caching, context window accumulation, or why a 30-minute loop and a 5-minute cache expiry combine into a billing bomb. The tool just charges their card.

This is not an AI problem. This is a platform design problem that engineers have been solving for decades: rate limiting, circuit breakers, cost ceilings, hard caps. Stripe lets you set a spending limit in one click. Most AI platforms give you a dashboard that updates three days after the money is spent.

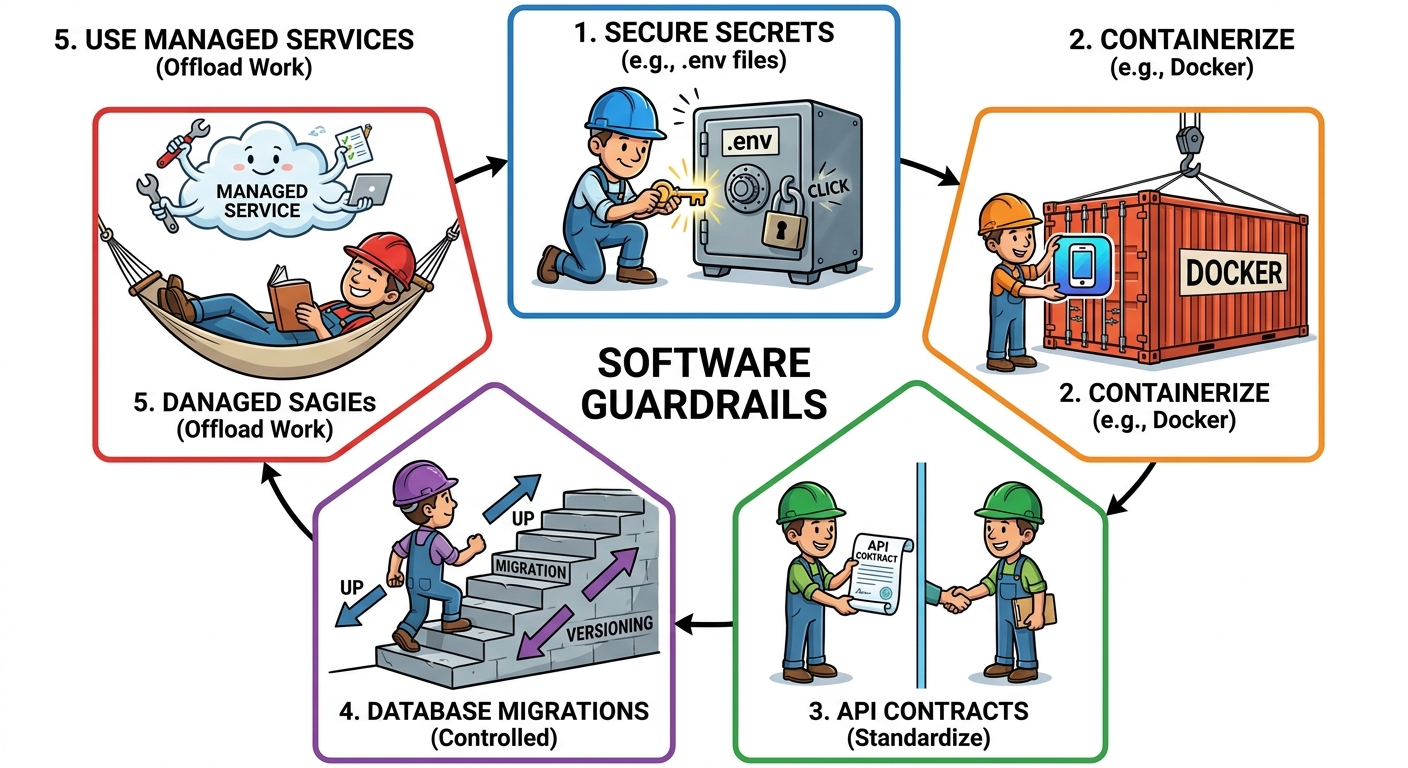

5. The Five Guardrails I Impose on Every Vibe-Coded App

I have been building production systems in fintech and SaaS for over many years. In the past eighteen months I have also vibe-coded applications. The difference between my vibe-coded apps and a non-engineer's vibe-coded apps is not the AI. It is the five guardrails of the production experience. I impose them from the first prompt.

None of these are hard to do. All of them are invisible to someone who has never run production.

Guardrail One: Secrets Hygiene

Every API key, every database credential, every third party token lives in a .env file. That file is gitignored. It never touches the frontend. It never ships to a browser. The values in .env are development credentials only: sandbox Stripe keys, local database passwords, test SendGrid tokens. They are swapped for real production credentials during CI/CD, injected at deploy time from a secrets manager. The agent never sees production secrets. The agent never needs to.

Vibe coders hardcode keys. Vibe coders paste them into config files and commit them. Vibe coders put them in environment variables on Vercel and forget they are exposed in client-side bundles. I have seen API keys scraped from public GitHub repos and used to mine crypto within hours. The attacker does not need to be sophisticated. They just need to scan for AWS_SECRET_ACCESS_KEY.

Guardrail Two: Containerization From Day One

Every app I build, even the smallest prototype, gets a Dockerfile. Not because the prototype needs Kubernetes. Because Docker means the app runs identically on my machine, on a teammate's machine, and on whatever cloud platform eventually hosts it. It means the Python version, the system dependencies, the database driver, the environment variables are all pinned and reproducible. When I need to scale to multiple instances, I do not spend two days debugging why the app runs locally but crashes in production.

Vibe coders run pip install globally and ship from their laptop. Then they try to deploy and nothing works and they do not know why and the AI cannot debug it because the AI does not know their local machine state.

Guardrail Three: Clean Boundaries and Explicit API Contracts

From the first iteration, frontend and backend are separate modules with a defined API contract, typically an OpenAPI spec or a shared TypeScript type definition. The frontend does not reach into the database. The backend does not know about React state. They talk through a narrow, typed, documented interface. If a teammate needs to rewrite the backend in a different language, the frontend does not care.

Vibe coders build a single file that queries the database from inside a React component. It works on their machine. It breaks in subtle, unbounded ways when real traffic hits it. The AI writes what works. It does not architect. It does not enforce boundaries.

Guardrail Four: Database Migration Discipline

Every schema change goes through a migration file: migrate up to apply it, migrate down to reverse it. The migration is versioned, committed, and runs as part of CI/CD. No manual ALTER TABLE statements. No drift between environments. If a migration fails in staging, it never reaches production.

Vibe coders connect to the database directly, add columns, and move on. Three weeks later nobody knows what schema version the production database is running. The AI cannot help because the AI only sees the current state, not the history, and the history is inside a developer's terminal session that closed three weeks ago.

Guardrail Five: Managed Services Over DIY Infrastructure

I do not manage Kubernetes clusters unless I have a team of five and a specific reason. I use GCP Cloud Run. I use managed Postgres. I use managed Redis. I pay a premium for someone else to handle patching, scaling, failover, and monitoring. The agility I gain from not managing infrastructure is worth more than the cost difference.

Vibe coders do not know what they do not need to manage. They hear "you should use Kubernetes" and they spin up a cluster for a two-user prototype. Or they hear "serverless is the future" and they build on Lambda without understanding cold starts, concurrency limits, or the fact that their function times out at 30 seconds on the free tier. Then they pay for both the complexity and the outage.

These five guardrails are a minimum. Not a maximum. They are the line between "it works for me" and "it works for a stranger with a credit card at 3 AM." The AI will not suggest them. The AI will happily build you an app that works perfectly and is bleeding credentials into your frontend bundle.

6. The People You Need (And Why You Cannot Skip Them)

There is no configuration flag that turns a prototype into a platform. There is no prompt you can type that makes an app production grade. There is no "vibe to production" button.

When the prototype earns real users, you hire engineers. End of story.

I do not mean you hire someone who completed a three month bootcamp and built a to-do app with Claude. I mean you hire someone who has been paged at 3 AM and fixed a production database that was on fire. Someone who has watched a deployment roll back. Someone who has written a postmortem and meant it. Someone who knows what they are skipping when they skip something.

That is the real price of production grade. Not a tool. Not a checklist. People who have done it before.

Vibe coding got us to beta. Engineering got us to production. There is no skip.

7. What the "Ship Fast" Crowd Gets Right and Wrong

Every startup should ship fast. I believe this. I have shipped fast my entire career. I have deployed on Fridays and gone to dinner - hot fixes ;).

But there is a gap between fast and reckless, and the gap is knowing what you are skipping.

When I skip something, I know what it is. I know the cost. I know the trigger that says "this is no longer skippable." I know that three users with no revenue does not need a Redis cluster, and I know that fifty paying customers with an SLA means the time has come.

Vibe coders skip things they do not know exist. They skip environment separation. They skip backup isolation. They skip API rate limiting. They skip the thing that burns them, because the AI did not mention it and their mental model of "what a production app needs" is defined entirely by what the AI generates.

The "move fast and break things" ethos assumes you know what might break. It assumes you have seen the failure modes and made a conscious choice. It assumes you can fix the thing when it breaks because you understand how it works.

Vibe coders have none of those assumptions. They did not build the thing. They described it. The AI built it. When it breaks, they cannot fix it because they do not know how it works, what it depends on, or where the failure surface even is. The AI is the only person who understands the code, and the AI is not on call.

This is not a critique of shipping fast. This is a critique of shipping blind.

There is a moment every production engineer recognizes. It is the moment between deploying and waiting. You deployed. You are watching the dashboards. You are listening. That moment, the quiet one, is earned. You earned it because you know what can go wrong and you checked for each thing. The vibe coder does not know what can go wrong, so they do not check, and they do not wait, and they do not feel the quiet. They just close the laptop and the first sign of trouble is a user on Twitter.

8. What I Am Still Figuring Out

I do not have a clean ending for this post.

We have known the production engineering checklist for decades. Environment separation. Least privilege. Backup isolation. Secrets management. CI/CD gating. Migration discipline. It is not a secret. It is not proprietary. It is written in every book, every blog, every postmortem from every company that has ever lost data at 3 AM.

And yet millions of people are building software right now who will never see that checklist until they are on the wrong side of it. The AI will not show it to them. The platforms will not show it to them. The tutorials will not show it to them. They will ship, they will get users, and they will learn the hard way.

What is the delivery mechanism? How do you package production grade awareness in a way a vibe coder actually encounters before the incident?

Documentation will not do it. Nobody reads documentation before they need it. Linters will not do it. Cursor cannot lint for "you gave me a production token." The platforms are not incentivized to do it. Anthropic charges by the token, not by the avoided incident. Vercel makes money when you deploy, not when you deploy safely.

Maybe the answer is a pre-deployment checklist that blocks the deploy. A gate that says "prove your backups are isolated" and "prove your agent cannot touch production" before the CD pipeline fires. Maybe it is a certification, a badge, a community norm: "production verified," like the blue checkmark but for infrastructure.

Maybe it is just a rite of passage. Maybe everyone ships something that burns them once, and the ones who survive become the engineers the next startup hires.

I do not know. I know the checklist. I know it is not being delivered. And I know the next PocketOS is only a matter of time.

Refrences

PocketOS

- https://www.fastcompany.com/91533544/cursor-claude-ai-agent-deleted-software-company-pocket-os-database-jer-crane

- https://www.theregister.com/2026/04/27/cursoropus_agent_snuffs_out_pocketos/

Amazon Outages

- https://vibegraveyard.ai/story/amazon-ai-code-retail-outages/

- https://oecd.ai/en/incidents/2026-03-10-01aa

$6,000 Claude Loop

Veracode GenAI Code Security

- https://www.veracode.com/blog/genai-code-security-report/

- https://www.veracode.com/resources/analyst-reports/2025-genai-code-security-report/

Apiiro (Fortune 50 data)

- https://apiiro.com/blog/4x-velocity-10x-vulnerabilities-ai-coding-assistants-are-shipping-more-risks/

- https://www.theregister.com/2025/09/05/ai_code_assistants_security_problems/

Georgia Tech Vibe Security Radar

- https://scp.cc.gatech.edu/external-news/infosecurity-magazine-security-researchers-sound-alarm-vulnerabilities-ai-generated

- https://vibegraveyard.ai/story/georgia-tech-vibe-security-radar-ai-code-cves/

- https://www.infosecurity-magazine.com/news/ai-generated-code-vulnerabilities/

Slopsquatting

- https://www.startupdefense.io/blog/slopsquatting-ai-supply-chain-threat-startups

- https://www.aikido.dev/blog/slopsquatting-ai-package-hallucination-attacks

- https://en.wikipedia.org/wiki/Slopsquatting

- https://www.theregister.com/security/2024/03/28/ai-bots-hallucinate-software-packages-and-devs-download-them/

Lightrun 2026 Report

- https://venturebeat.com/technology/43-of-ai-generated-code-changes-need-debugging-in-production-survey-finds

- https://finance.yahoo.com/sectors/technology/articles/lightrun-2026-state-ai-powered-133300198.html

CSA Research Note (comprehensive summary)