From a Talk in Alexandria to Agentic AI: My Honest AI Journey, 2024-2026

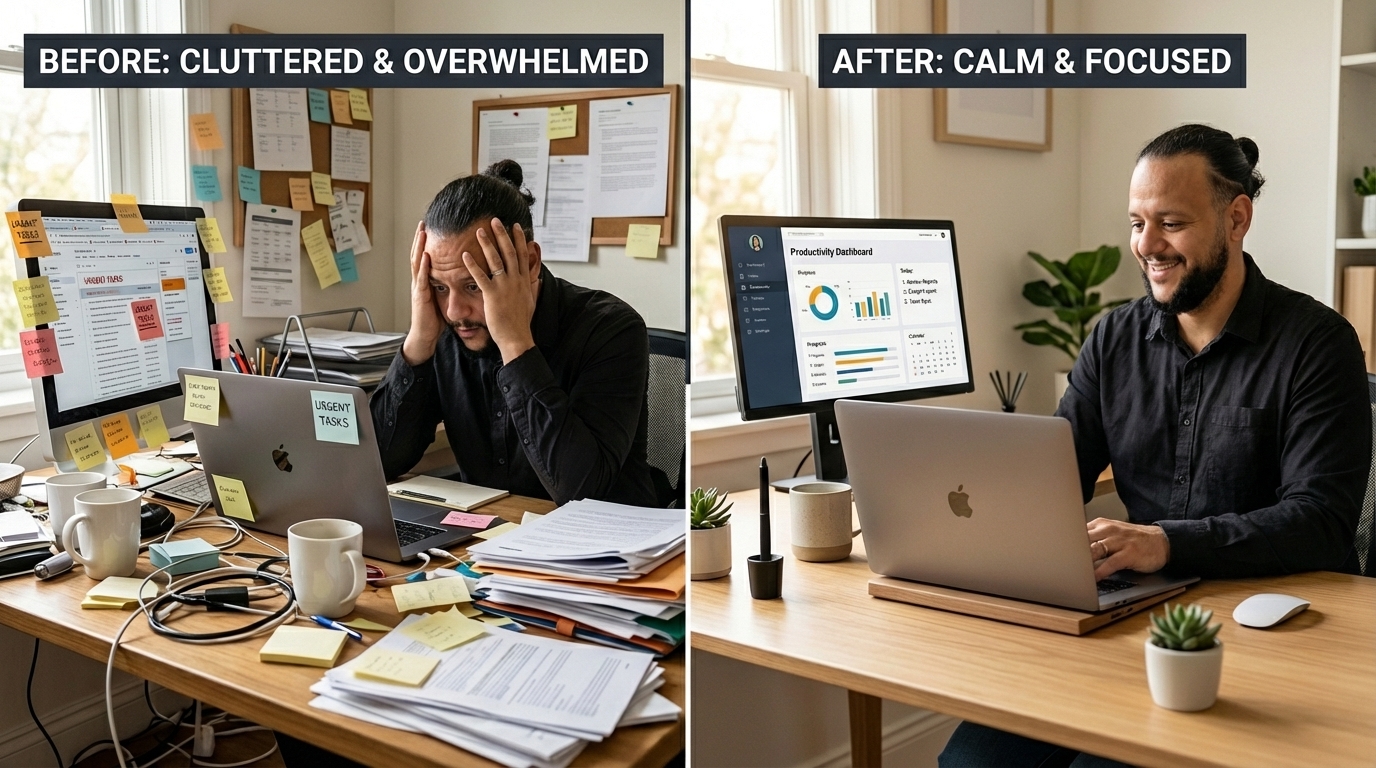

In mid-2024, I had zero hands-on experience with generative AI. Less than twenty months later, I run agentic workflows, maintain my website with AI-powered IDEs, and my productivity has gone up by at least 10x on tasks that used to eat hours.

This post is about everything between those two points. The experiments that worked, the hardware that didn't, the money I spent, and the moment I realized I was looking in the wrong direction entirely.

Mid-2024: The Wake-Up Call in Alexandria

I was on my yearly vacation in Alexandria when I came across an event at the Bibliotheca Alexandrina. It was organized by Engineer Mohamed Hanafy, one of my former instructors at ITI, where I studied software development back in 2010.

He went through a bunch of topics: what LLMs are, chatbots, automations, image creation, video editing, and how you can use all of that in marketing and content production. That lecture was a big eye-opener. I realized something huge was happening and I had been ignoring it.

I flew back to the Netherlands with one thought: I need to catch up. Fast.

September 2024: Becoming a One-Man Content Team

I needed a project to force myself to actually use AI tools, not just read about them. So I started a Facebook page with reels aimed at the Egyptian community in the Netherlands.

I used LeonardoAI for image generation, ChatGPT to help with content writing, and Photoroom to create thumbnails. The first few prompts were rough. You figure out what works when you need something specific and your third attempt still isn't getting you there. But the repetition made me better fast.

Why LeonardoAI and not Midjourney? Honestly: free credits. I bounce between the two depending on which one still has credits left. When LeonardoAI runs out, I switch to Midjourney. When Midjourney runs out, I switch back. Cheap thrills. It works.

The page got real traction. One video hit a few thousand views. But the part that surprised me most wasn't the numbers. It was that I was doing the work of an entire team by myself: idea generation, research, scripting, video recording, editing A-roll and B-roll, publishing, and promoting. One person, with AI handling the parts that would normally need a designer, a copywriter, and a video editor. That was the first time AI felt like a real multiplier, not a toy.

But I was hitting a ceiling. Everything ran through cloud APIs. I wanted to run models locally, try different things, go deeper. My old laptop wasn't going to cut it.

It was time to spend money.

Late 2024: The Hardware Rabbit Hole

Attempt #1: Jetson Orin Nano ($249)

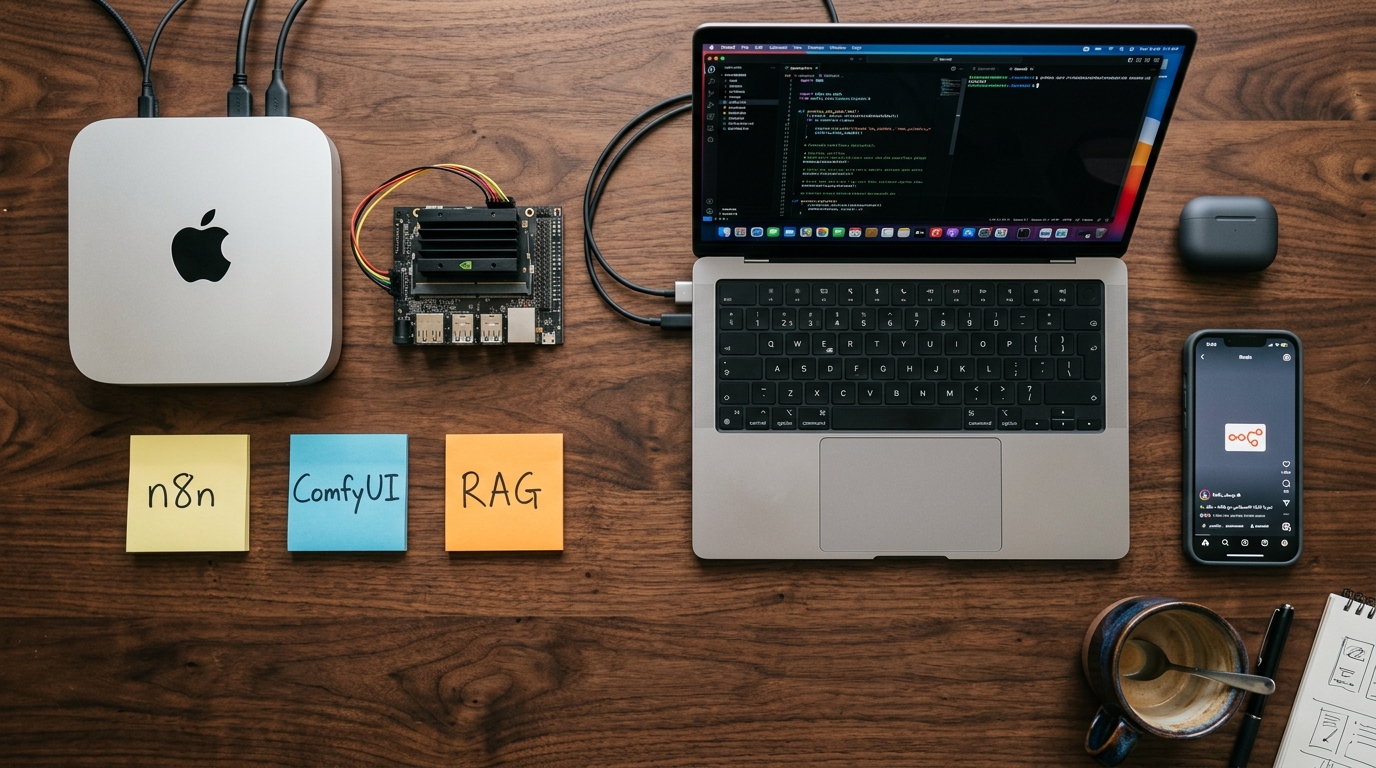

My first investment was the Jetson Orin Nano Super Developer Kit. I updated the firmware, got familiar with its specs (67 TOPS of AI compute), and tried to understand NVIDIA's ecosystem for AI on the edge.

I set up workflows with n8n and ComfyUI, worked with diffusion models and LoRAs, tried text-to-speech (TTS), and pulled several models from Hugging Face.

It was decent. But the truth is that 8GB of memory, shared between the CPU and GPU, isn't enough. Even small models had performance issues. That was annoying. So I figured: maybe the problem is the device, not the idea.

Attempt #2: Mac Mini M4 (€700)

I went for a Mac Mini M4 with 16GB of shared memory. It was a step up. I installed Ollama, ComfyUI, and n8n, and things ran more smoothly than on the Jetson.

But Apple Silicon brought its own problems. I couldn't get Stable Diffusion and Flux running with proper GPU acceleration, because most of that tooling is built and optimized for NVIDIA cards. I hit low-level errors in PyTorch and TensorFlow that took real time to debug. Some things I just couldn't get working properly.

On the upside, I did real work with n8n on this machine. I built AI agent workflows, set up RAG pipelines, and connected multi-agent setups using MCP (Model Context Protocol) servers. For quick prototypes, n8n was a win. It let me test ideas before investing time in building agentic flows with LangGraph or Google's ADK (Agent Development Kit).

On audio: I didn't want to spend money on an expensive microphone before I knew this content thing was going anywhere. So I used Adobe Podcast to enhance my voice recordings instead. It cleaned up the audio well enough that I didn't need to invest in hardware upfront. Eventually I did move to a Hollyland Lark wireless mic combined with AI enhancement, but Adobe Podcast bought me time to figure out if the investment was worth it first.

I also used ElevenLabs to create a voice clone of myself. That same "test it cheap before you buy" mindset applied here too.

And then I tried the thing I really wanted: running HunyuanVideo locally for video generation. The Mac Mini M4's GPU didn't have enough memory for it. That was the end of that experiment.

The Pattern Was Clear

Two hardware purchases. Around $1,000 total. Both hit their GPU memory limits too quickly for anything serious. Running models locally with decent results needs a major investment in hardware. I looked at what that would actually cost and decided: I'm done chasing local hardware.

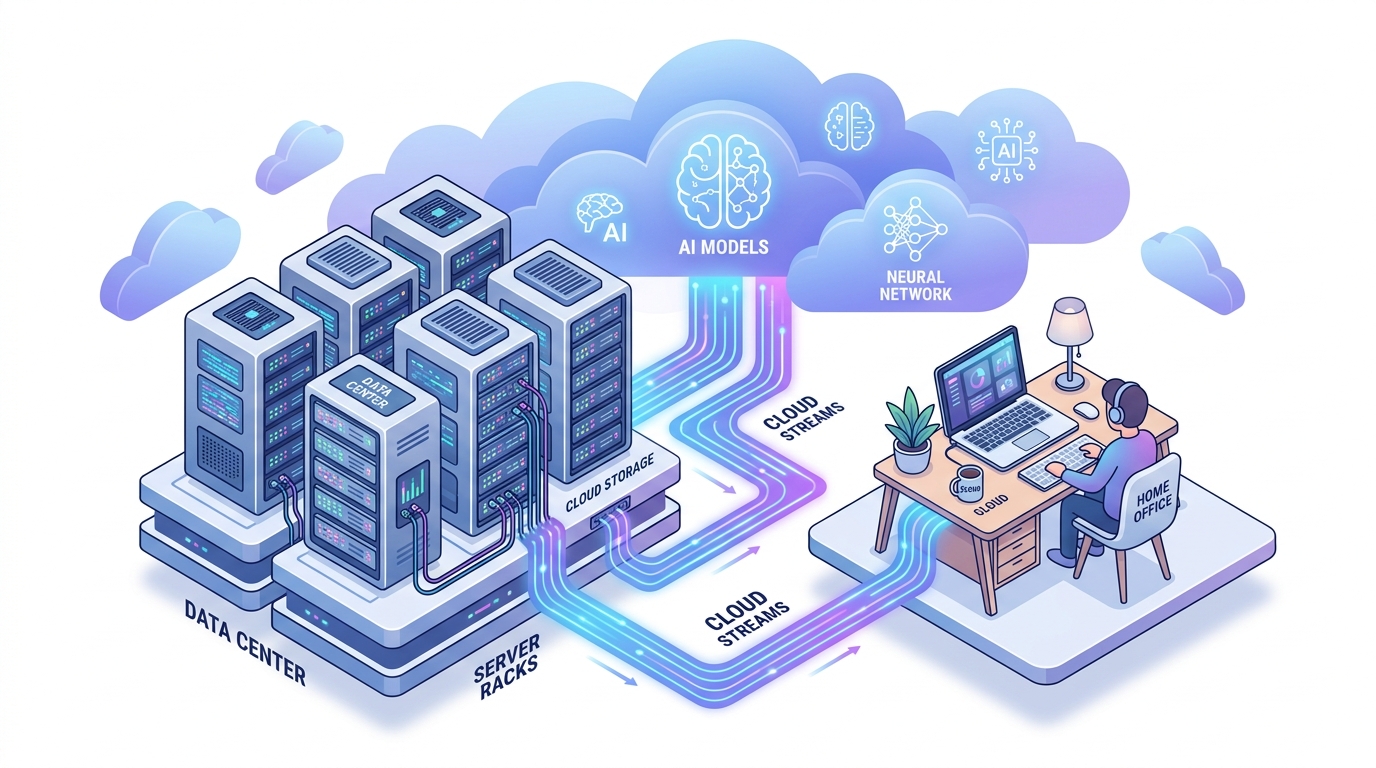

Early 2025: The Pivot to Cloud

That decision changed everything. Instead of fighting GPU limits, I pointed my n8n workflows at cloud APIs with real power behind them.

I started using Google AI Studio and Vertex AI Model Garden, but not just for Gemini. I wanted to explore heavy models, anything above 16B parameters, from different providers: Anthropic models, Chinese models, others. I wanted to connect my local n8n with the advanced reasoning you get from those cloud models. Suddenly, the same n8n setup that was struggling locally was doing serious work.

NotebookLM became my favorite out-of-the-box RAG tool. I used it for different things: preparing sources for my next YouTube video and summarizing study material. It just worked.

I also started writing content and making YouTube thumbnails with Canva and its built-in AI features. Between Canva, NotebookLM, and cloud LLMs, I had a production setup that actually worked. No firmware updates, no PyTorch errors, no GPU memory limits.

Under $50 a month. Combined.

2025: Going Agentic

This is where it got interesting.

Browser and Computer Use

In early 2025, I tried agentic web scraping with Playwright. It was tedious. Getting reliable results meant writing brittle scripts, handling edge cases constantly, and spending more time debugging the automation than doing the actual work.

Then tools like Claude for Chrome and Chrome agentic mode showed up, and it was a completely different story. I compared them alongside Atlas, Comet, and Manus. After testing side by side, Chrome agentic mode turned out to be the most convenient option. You can actually get things done with it, especially for debugging and testing code on web apps. The gap between early 2025 Playwright scraping and where browser agents are now is massive.

Learning the Patterns

I spent time teaching myself agentic AI architecture and prompt-writing best practices. It got serious enough that I recorded a YouTube video walking through several patterns: ReAct, Reflection, Planning, Tool Use, Multi-Agent Collaboration, Sequential Workflow, and Human-in-the-Loop.

Putting that video together forced me to organize what I'd learned into something I could actually explain, which is where the real understanding happens.
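To make the first of those patterns concrete, here is a minimal sketch of a ReAct loop (reason, act, observe, repeat) in Python. It is a hypothetical illustration, not a production agent: `scripted_model` stands in for a real LLM call, and the `Action: tool[arg]` text format and the `add` tool are assumptions made up for this example.

```python
from typing import Callable

# Hypothetical tool registry: tool name -> function that takes a string argument.
TOOLS: dict[str, Callable[[str], str]] = {
    "add": lambda arg: str(sum(int(x) for x in arg.split(","))),
}


def scripted_model(transcript: list[str]) -> str:
    """Hypothetical stand-in for an LLM call: asks for a tool first,
    then answers once an observation is in the transcript."""
    if not any(line.startswith("Observation:") for line in transcript):
        return "Action: add[2,3]"
    return "Final Answer: 5"


def react_loop(question: str,
               model: Callable[[list[str]], str],
               max_steps: int = 5) -> str:
    transcript = [f"Question: {question}"]
    for _ in range(max_steps):
        step = model(transcript)  # Reason: the model decides the next step
        transcript.append(step)
        if step.startswith("Final Answer:"):
            return step.removeprefix("Final Answer:").strip()
        if step.startswith("Action:"):
            # Parse the assumed "Action: tool[arg]" format.
            call = step.removeprefix("Action:").strip()
            name, _, rest = call.partition("[")
            result = TOOLS[name](rest.rstrip("]"))  # Act: run the tool
            transcript.append(f"Observation: {result}")  # Observe: feed it back
    raise RuntimeError("No final answer within max_steps")


print(react_loop("What is 2 + 3?", scripted_model))
```

In a real agent, `scripted_model` would be replaced by a call to an LLM API, and the `max_steps` cap keeps a confused model from looping forever.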

Building with AI-Powered IDEs

On the code side, I used Replit to build the first version of my website. I now maintain it with Claude Code and Antigravity, Google's agentic IDE that competes directly with Claude Code. These tools completely changed how I ship code.

What Changed at Work

All this side exploration had a direct effect on how I work as a Staff Engineer. I became a better communicator. My writing is cleaner, my grammar is tighter, and I can produce documentation and technical specs with more clarity and speed than before.

Between prompting for content, code, and architecture work, I'd say my productivity went up by at least 10x on tasks that used to eat hours: drafting proposals, reviewing code, writing technical docs, preparing presentations. AI didn't just change what I build on the side. It changed how I work every day.

Where This Is Going

This whole journey has taken less than 20 months. Looking back at what I've covered, it's a lot. And there are still a lot of tools, and a lot of governance, yet to come. Even the major players are shifting constantly.

I'm avoiding OpenAI for now. I don't like where the company is heading. Right now I have subscriptions to both GCP and Claude, just for personal use. Under $50 a month combined. The iterations and new models are coming faster and faster. It's a bit scary and exciting at the same time.

My focus now is going deeper into the Claude ecosystem (Skills, Claude Code, the CLI, Cowork, etc.).

If you're starting this journey, here's my one piece of advice: start with the big market players, like Google and Anthropic, the ones with a full ecosystem. Even if they're a step behind on a specific benchmark, they give you the complete package: APIs, tooling, integrations, scale, and long-term support. AI wrappers and smaller tools are not doing well right now. The ones that survive are the ones backed by companies that can keep shipping. Go where the ecosystem is, not where the hype is. And always keep morality in mind.

The pace of change isn't slowing down. Neither am I.