Your Coding Interview Is Measuring the Wrong Thing

Three companies reached out to me for a senior IC engineering role.

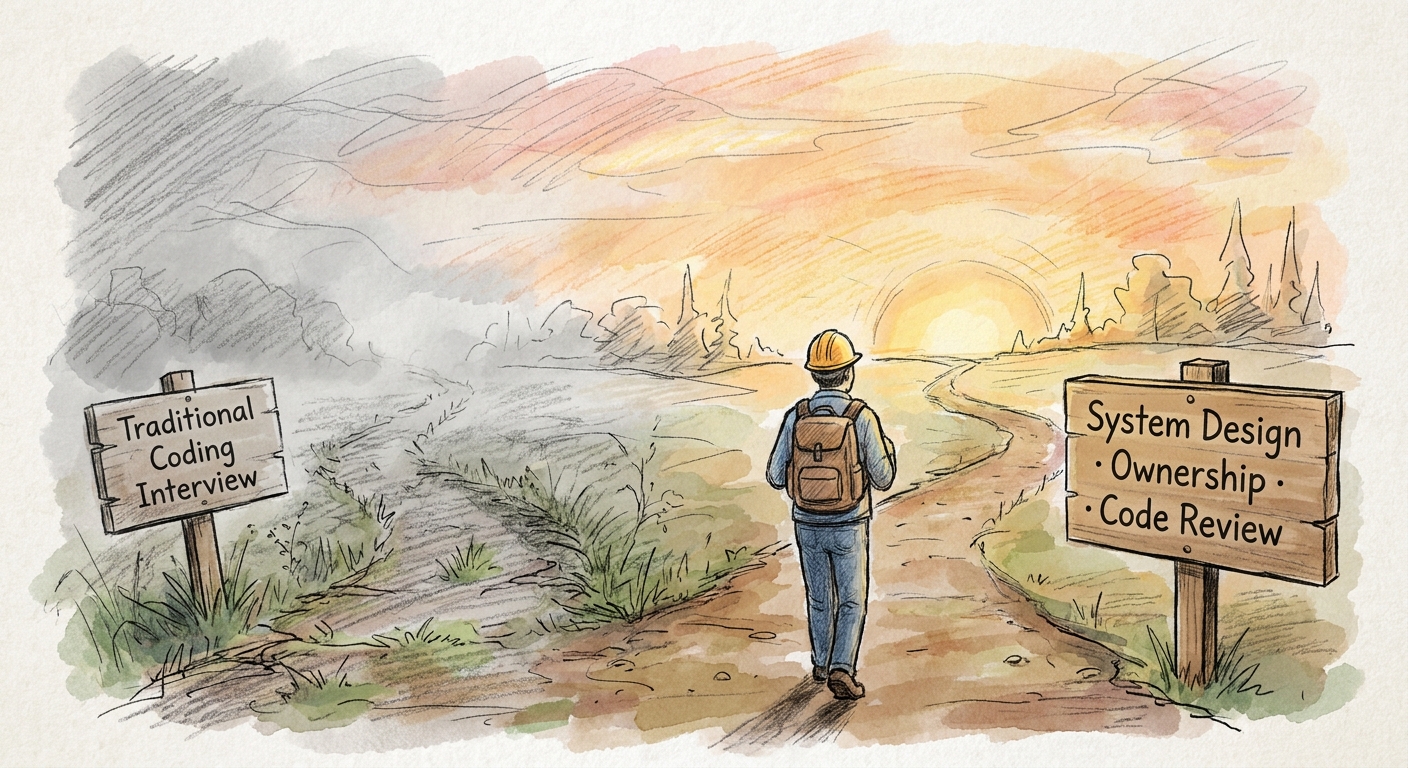

The first sent a full-app coding challenge. The second sent a leetcode link. The third scheduled a live system design session. No coding. No puzzles. Just a conversation about architecture, trade-offs, and ownership.

Only one was testing for the job that actually exists. The other two were giving tests that Claude can pass in seconds.

1. The Interview That Tested the Actual Job

The third company asked me to walk through systems I had built. Not the code. The decisions. Why microservices here and not a monolith? What broke at scale? What did I learn from the last incident I owned?

A typical workday for an IC at this company looked like this: grasp the necessary domain context, let coding agents generate the code, inspect the agents' output, catch what they missed, ship. One IC had not written a line of code from scratch in weeks. Not because they couldn't. Because writing code was no longer the highest-leverage thing they could do.

This is not a niche experience anymore. Gartner projects that 40% of enterprise applications will embed AI agents by the end of 2026, up from fewer than 5% in 2025. Anthropic's 2026 Agentic Coding Trends Report documents a fivefold increase in teams running five or more specialized coding agents concurrently. The shift is broad, fast, and already redefining what senior IC work looks like.

2. Code Review Got Harder Than Writing

Writing code is muscle memory. The logic forms in your head and your fingers execute patterns you have internalized over years.

Reading code you did not write, generated by an agent that does not share your mental model, is cognitively heavier. You reverse-engineer the reasoning from the output. You hold the system in your head and ask: does this actually fit? What assumptions did it make? What did it silently misunderstand?

Agents produce code that looks correct. It compiles. Tests pass. And it is wrong in a way only domain knowledge catches. Wrong internal service. A deprecated pattern. Three of four edge cases handled, the fourth silently dropped.

Spotting these things demands deeper understanding than writing the code yourself. Reviewing agent output is the hardest code review I have ever done. Prepfully's 2026 SWE interview rubric captures this shift: it now evaluates "judgment, problem solving under constraints, and communication" alongside coding ability. The thinking behind the code is what's scarce. The code itself is cheap.
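To make the "three of four edge cases" failure concrete, here is a minimal sketch. It is not taken from any real codebase: the function names and the bill-splitting scenario are hypothetical, chosen only to show code that looks correct and passes its tests while breaking a rule that a reviewer who knows the domain would check.

```python
def split_cents_agent(total_cents: int, parts: int) -> list[int]:
    """Hypothetical agent-written version: handles the obvious edge cases."""
    if parts <= 0:
        raise ValueError("parts must be positive")      # edge case 1
    if total_cents < 0:
        raise ValueError("total must be non-negative")  # edge case 2
    if total_cents == 0:
        return [0] * parts                              # edge case 3
    share = total_cents // parts
    return [share] * parts  # edge case 4: leftover cents silently vanish


def split_cents_reviewed(total_cents: int, parts: int) -> list[int]:
    """Same checks, but the remainder is handed out one cent at a time."""
    if parts <= 0:
        raise ValueError("parts must be positive")
    if total_cents < 0:
        raise ValueError("total must be non-negative")
    share, remainder = divmod(total_cents, parts)
    return [share + 1 if i < remainder else share for i in range(parts)]


# The kind of tests that might ship with the generated code: they pass.
assert split_cents_agent(100, 4) == [25, 25, 25, 25]
assert split_cents_agent(0, 3) == [0, 0, 0]

# The check a reviewer who knows the domain adds: money must be conserved.
print(sum(split_cents_agent(100, 3)))     # 99, one cent is gone
print(sum(split_cents_reviewed(100, 3)))  # 100
```

Nothing in the first version would fail a compiler, a linter, or the tests above. The gap only shows up once someone asks whether the shares still add up to the total.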

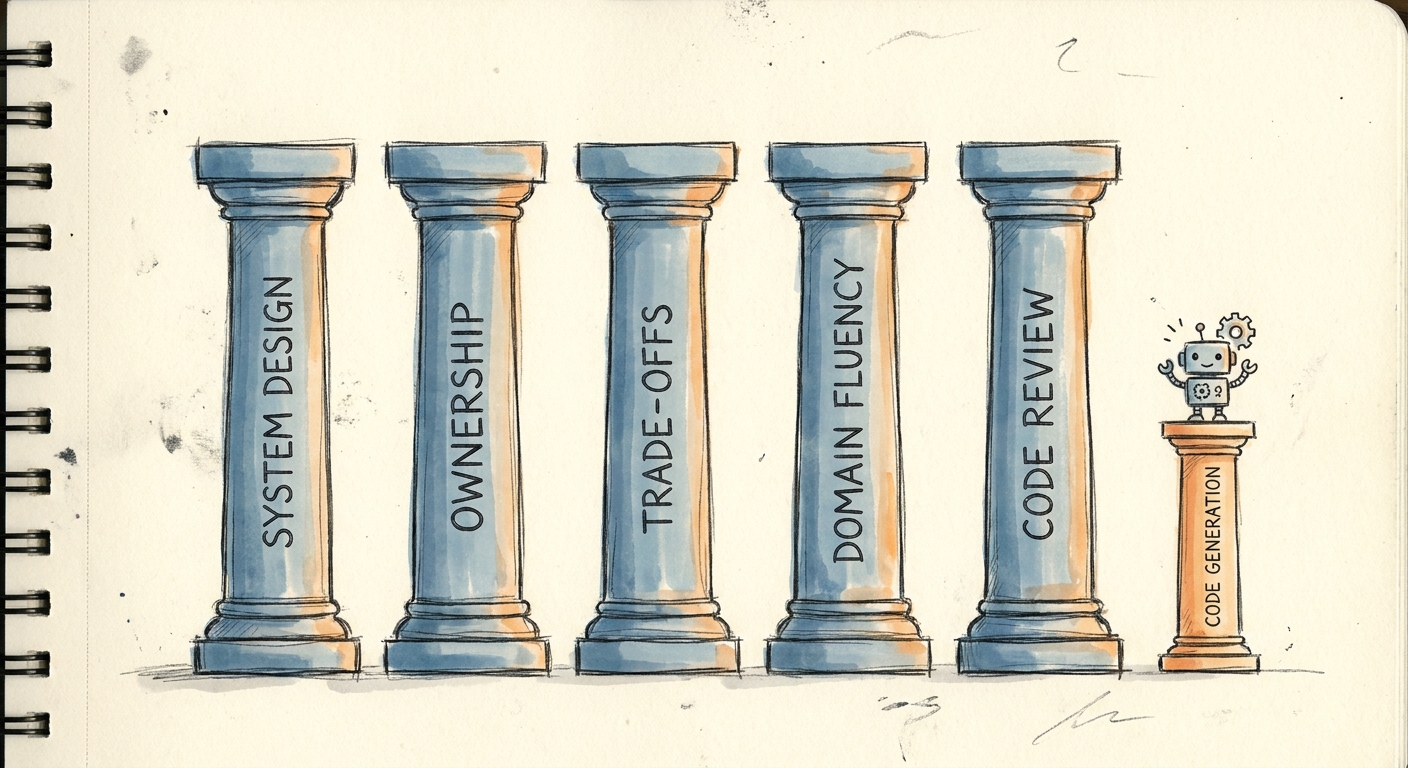

3. The Surviving Skills

When the code writes itself, the skills that survive are the ones that were always harder to learn.

System design intuition. Not knowing the syntax for a distributed lock. Knowing when you need one and when you don't. Knowing a bottleneck won't show up in benchmarks because it depends on a real-world access pattern the agent has never seen.

Ownership. Not "who wrote this bug." Who catches the edge case during review because they know the product, the user, and the failure mode from the last incident?

Trade-off fluency. Agents enumerate options. They cannot weigh them against your team, your timeline, your risk tolerance, your existing infrastructure. That judgment is yours.

Domain fluency. Not memorizing the codebase. Knowing the domain deeply enough to translate business needs into architecture. To write a prompt that captures the real constraint, not just the stated requirement. To inspect agent output and spot where it misunderstood the domain model. Agents can read your code. They cannot read your business.

4. To Companies Still Running Coding Interviews

If your senior IC hiring process still starts with a coding challenge, ask what you're actually measuring. Coding skills matter. You cannot review code you couldn't write yourself. The foundation is non-negotiable.

But the format is broken. The leetcode gauntlet. The algorithm puzzle under time pressure. The take-home that consumes a weekend. Any candidate can screenshot it, drop it into Claude, and pass in five seconds. You're measuring willingness to jump through hoops, not the ability to think about systems.

Try a code review session instead. Give them a real PR from your codebase, one that shipped with a subtle bug. Watch how they read it. What do they ask? What do they catch? Twenty minutes of that tells you more than any leetcode medium.

Or a refactoring discussion. Here is a real production module. It works but it's messy. What would you change? Where would you start? You're testing whether they can think about a system, not whether they memorized sorting algorithms.

Or a system design walkthrough. Not "design Twitter." Design something relevant to your product. Real constraints, real database choices, real trade-offs. Watch how they reason.

The job changed. The interview hasn't caught up yet.

5. What I'm Still Figuring Out

Scale. Does agent management work at fifty engineers? Five hundred? I haven't lived that yet.

Fair interviewing. The system design session worked for me. Is it reproducible across every candidate, every level, every domain? Unclear.

The role itself. Is "senior IC who reviews and ships through agents" the permanent shape, or a transitional one? Something is settling. It hasn't settled yet.

One of those three companies tested for the job that exists. The other two tested for a job that is disappearing. The distinction cost them candidates.

References

- GitHub, "Introducing Agent HQ," February 4, 2026. Unified multi-agent coding platform with enterprise governance.

- Gartner, AI Agent Adoption Forecast, 2026. Projects 40% of enterprise applications will embed AI agents by end of 2026, up from fewer than 5% in 2025.

- Anthropic, "2026 Agentic Coding Trends Report," 2026. Reports 5x increase in teams running five or more specialized coding agents concurrently.

- Prepfully, "Software Engineer Interview Rubric 2026," 2026. Notes evaluation shift toward judgment, problem solving under constraints, and communication.